First Published 22nd April, 2026

Quick Summary

The quality of your website is harming your business. Doing the simple things well is a slightly boring but effective strategy for long-term growth, because it ensures that your site works well for the maximum number of users (human and non-human). When I talk about quality here, I don't mean polish or perfection. I mean systems that are reliable, understandable, and deliver consistent outcomes. Ultimately, you are responsible for the quality of your site. In this blog I hope to give you the understanding and motivation to implement change and raise your site above the very low bar set by the vast majority of websites. Quality is not a short-term topic; it takes consistent discipline over years and requires long-term strategic thinking. Let's have a conversation about how I can help you build quality into your roadmap: email me at hello@stuart-mcmillan.com

Intro

In late 2025 OpenAI announced that you would be able to shop directly in ChatGPT, with their specialised agent completing purchases on your behalf. Then, less than six months later, they announced a change in strategy: users would be directed to the retailer's website to complete the transaction. Why this sea change in direction? Basically, the shopping agent found it too difficult to navigate most sites and proceed through the purchase funnel. Sites had confusing options, unclear navigation and, in many cases, were simply broken.

Humans, it seems, are significantly more fault tolerant than the smartest digital assistant on the planet. Or at least some humans are.

The Symptoms Of The Problem

The Most Human of Humans

27 and a half years ago, in October 1998, UK retail changed forever: Amazon launched in the UK, one of its first international markets.

Let's take the cohort of 20 to 24 year olds that existed when Amazon arrived in the UK: there were 3.6 million of them. Approximately 60% of them needed prescription glasses or contact lenses.

In the 27 years since, as we all became hooked on Prime deliveries, the percentage of that cohort needing glasses will have risen to 84%, which is roughly 900,000 more people. This is no surprise: our vision deteriorates as we get older. Over the same period, the percentage of the UK population over 65 has gone from 16% to 20%. More than 2 million people in the UK live with significant sight loss.
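The "900k more" figure is the rise in the percentage applied to the original cohort, assuming the cohort is still roughly 3.6 million people:

$$
3.6\,\text{million} \times (0.84 - 0.60) = 3.6\,\text{million} \times 0.24 \approx 864{,}000 \approx 900\text{k}
$$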

Contrast this with the digital provision on offer for people with sight issues: it is poor fare at best. Accessibility, if considered at all, is usually a token gesture to tick a compliance box. Even the website of the Royal National Institute of Blind People (RNIB) has significant room for improvement for those using screen readers.

This is a long way of saying that there is a significant cohort of people that are being badly neglected by the digital industry.

Fast is Easy

I have laboured this point too many times in the past, but slow websites are still the norm. Thankfully, there has been some progress: pages are loading faster than ever, and more sites now achieve a Largest Contentful Paint (LCP) under 2.5 seconds, the threshold Google rates as "good".

Unfortunately, the same cannot be said for Interaction to Next Paint (INP), the metric that measures how responsive a site feels to user input. All too often, every mouse click and every key press feels sluggish. You click to open a menu, it doesn't open instantly, so you click again, inadvertently closing the menu just as it appears.

It is frustrating and feels like hard work. Not really the great brand experience you had hoped for.
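To make the two metrics a little more concrete, here is a simplified Python sketch (not Google's or the browser's implementation) that estimates INP from a list of recorded interaction latencies, by taking the slowest interaction while ignoring one outlier for every 50 interactions, and rates values against Google's published thresholds: LCP is "good" up to 2.5 s and "poor" above 4 s; INP is "good" up to 200 ms and "poor" above 500 ms. The latency values in the example are hypothetical; in practice you would collect real-user data, for instance with Google's `web-vitals` JavaScript library.

```python
# Simplified sketch: estimate INP from interaction latencies (milliseconds)
# and rate LCP/INP values against Google's published thresholds.

# (good upper bound, needs-improvement upper bound), in milliseconds
LCP_THRESHOLDS = (2500, 4000)
INP_THRESHOLDS = (200, 500)


def estimate_inp(latencies_ms: list[float]) -> float:
    """Return the worst latency, ignoring one outlier per 50 interactions."""
    if not latencies_ms:
        raise ValueError("no interactions recorded")
    ranked = sorted(latencies_ms, reverse=True)
    # Skip one of the slowest interactions for every 50 recorded
    index = min(len(ranked) - 1, len(ranked) // 50)
    return ranked[index]


def rate(value_ms: float, good: float, poor: float) -> str:
    """Classify a metric value as good, needs improvement or poor."""
    if value_ms <= good:
        return "good"
    if value_ms <= poor:
        return "needs improvement"
    return "poor"


if __name__ == "__main__":
    clicks = [80, 120, 95, 650, 140]  # hypothetical latencies for one visit
    inp = estimate_inp(clicks)
    print(inp, rate(inp, *INP_THRESHOLDS))
```

With only five interactions nothing is discarded, so a single 650 ms menu click is enough to make the whole visit rate as "poor".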

Cost of fix

So let's just fix it, okay?

Great. Well, if it were only that simple.

Site responsiveness is really a JavaScript issue. The median page ships around 700KB of JavaScript (with the heaviest 10% of pages coming in at around 2MB), which equates to over 150,000 lines of code. Finding the code responsible is not for the faint of heart. Coding boot camps teach you to write code, but not how to debug legacy code.

When it comes to HTML, the code that generates it is often buried under many layers of abstraction, which makes it difficult to have fine-grained control over the final HTML.

All these issues are symptoms of a single malaise.

Why do we have these issues?

The answer is simple. The TL;DR version is that we have a quality problem. We have always had a quality problem, and it isn't getting better. In the rush to add new features to our websites, we have compromised on quality, and in so doing we have actually harmed the thing we were trying to improve: the user experience. We need to refocus on doing the little things well.

Quality Quantified

Definitions of concepts like "quality" are often highly subjective. Unless you are Conan, who objectively knows what is best in life.

Often, we look at a website and consider whether it contains all the desired features and whether it is visually attractive. But these are subjective judgements: on those factors alone, one cannot objectively say that one website is higher quality than another.

The quality of a house is not based on the choice of paint colour, but on how well it is constructed and, to some extent, how easily it can be maintained. The same is true of websites. Quality is a measure of the code produced (HTML, CSS and JavaScript) and, to some extent, the average cost of change.

Most website developers would probably focus on the quality of the server-side code, but that is like focusing on the quality of the bricks used to build the house. It doesn't matter to the end user as long as the house doesn't fall down. It is also highly subjective, and something I plan to cover in a future blog post.

HTML

The most important quality measure for a website is the quality of its HTML. HTML is the bedrock on which every user agent builds the user experience, and the material it builds that experience from. Unfortunately, the vast majority of websites, perhaps more than 99% of them, have HTML with many defects.

This raises the question: if most websites have defective HTML, does the quality actually matter? Most websites seem to work OK, don't they?

But think of it this way: if your HTML were completely broken, the site would not work for anyone. Fix the most critical issues and you'll have a site that works for those browsing under ideal conditions. Fix more issues and the site becomes usable for people on older devices and browsers, or on poor network connections.

As you continue to improve the quality of the HTML, the site becomes more robust, and more accessible, for more users. Your site is more broken than you think; you just haven't tested it thoroughly enough.

The great thing is, there is a standard for HTML and readily available tools to check whether your webpage meets that standard. The crazy thing is that almost nobody checks this!
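The checker behind the W3C HTML validator, the Nu HTML Checker, can also be called from a script, which makes it easy to run on every release rather than once in a blue moon. This is a minimal sketch, assuming the public endpoint's JSON output mode (`?out=json`) and a POST of the raw HTML; the User-Agent string is a hypothetical placeholder, and for automated or high-volume use you would want to run your own copy of the checker rather than lean on the public service.

```python
import json
import urllib.request

# Public instance of the Nu HTML Checker, asked to reply in JSON.
# For regular automated use, consider hosting your own instance.
VALIDATOR_URL = "https://validator.w3.org/nu/?out=json"


def validate_html(html: str) -> list[dict]:
    """POST a document to the checker and return its list of messages."""
    request = urllib.request.Request(
        VALIDATOR_URL,
        data=html.encode("utf-8"),
        headers={
            "Content-Type": "text/html; charset=utf-8",
            "User-Agent": "site-quality-sketch/0.1",  # hypothetical identifier
        },
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.load(response)["messages"]


def summarise(messages: list[dict]) -> dict[str, int]:
    """Count messages by type ("error", "info", which includes warnings, etc.)."""
    counts: dict[str, int] = {}
    for message in messages:
        kind = message.get("type", "unknown")
        counts[kind] = counts.get(kind, 0) + 1
    return counts
```

Calling `summarise(validate_html(page_html))` gives a small set of numbers you can track over time; anything other than zero errors is worth a look.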

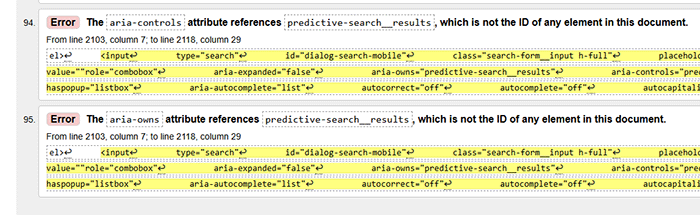

Here is a great example, of all the websites that should have high quality HTML it should be the Royal National Institute for the Blind. Below is a screenshot from the validator, showing a lot of issues, in particular with ARIA which is specifically designed to assist those using screen readers. Whoever built this needs to have a serious think about the quality of work they are doing.

Passing the HTML validator should be table stakes for anyone serious about the quality of their website. But you don't need to settle for table stakes: you can take your HTML from good (passes validation) to great. The validator won't tell you whether your HTML matches the information architecture you planned for the page. It won't tell you to use semantic elements or to add appropriate ARIA attributes; it will only tell you if you have implemented them badly. However, adding them (correctly) can add immense value to your HTML. I will cover this in a future blog post.

What of things other than HTML? Let us start with the easy one: CSS

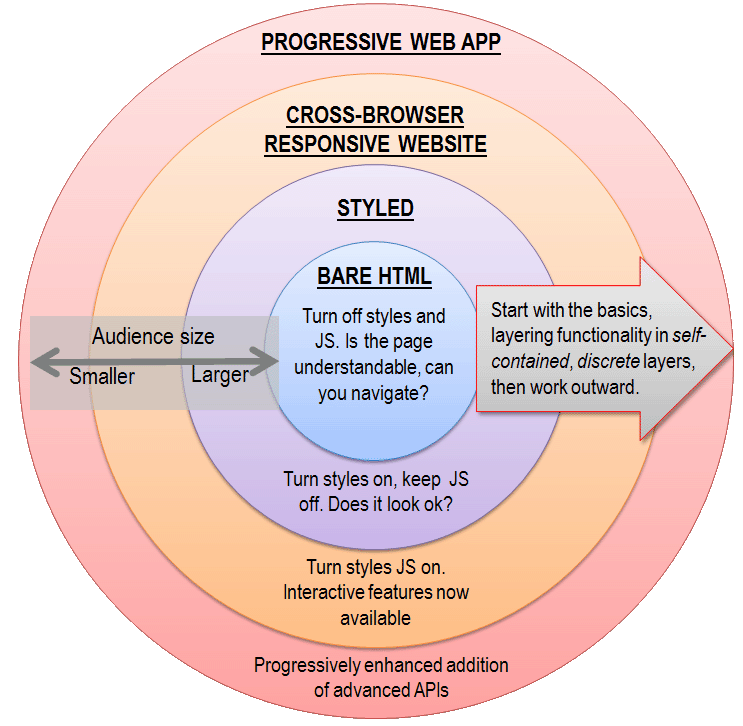

CSS is purely decorative: you should be able to turn off styles and still have a functional document that flows well and can be used. That's not to belittle style and design; they are immensely important to the user's experience, and most users aren't looking for a text-only experience. But styling is a layer on top of what should be a semantically marked-up document with a clearly designed information architecture.

As design is highly subjective and hard to measure, for the purposes of this exercise I am going to set it aside as a quality dimension. The quality of the CSS is best assessed by its size (smaller is better) and its maintainability. Why size? More CSS takes longer to parse and render, and the more CSS you use, the further you typically move away from the browser's default styling.

Browser default styles may be boring, but they are generally well thought out, so whenever it is possible to use the default styles, you should. The more CSS that is applied, the more likely it is that the visual meaning of an element departs radically from its semantic meaning. Just because you can style an `<h4>` to look bigger and more important than an `<h1>` does not mean you should. Actually, you should definitely not do this.
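CSS can make an `<h4>` look like an `<h1>`, but a screen reader announces the level that is in the markup, so the heading outline itself is worth checking. This is a minimal sketch using Python's standard-library `html.parser`; the two rules it applies (exactly one `<h1>`, no skipped levels when going deeper) are common conventions that the validator does not enforce, not requirements of the HTML standard.

```python
from html.parser import HTMLParser

HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}


class HeadingCollector(HTMLParser):
    """Record the level of every <h1>-<h6> in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        if tag in HEADINGS:
            self.levels.append(int(tag[1]))


def heading_problems(html: str) -> list[str]:
    """Report a missing/duplicated h1 and any skipped heading levels."""
    parser = HeadingCollector()
    parser.feed(html)
    problems = []
    h1_count = parser.levels.count(1)
    if h1_count != 1:
        problems.append(f"expected one h1, found {h1_count}")
    previous = 0
    for level in parser.levels:
        # Going deeper by more than one level leaves a gap in the outline
        if level > previous + 1:
            before = f"h{previous}" if previous else "the start of the page"
            problems.append(f"h{level} follows {before}")
        previous = level
    return problems
```

For example, `heading_problems("<h1>Shoes</h1><h4>Trainers</h4>")` reports that the `h4` follows the `h1` directly.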

Maintainability is also a key quality dimension for CSS. Do you have a consistent approach to selectors? Do you have a design system that is concise and well implemented globally in your CSS? Could you drop in a reasonably experienced developer who could easily make non-breaking changes? Have you eliminated all redundant CSS?
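As a rough first pass at finding redundant CSS, you can compare the class selectors in a stylesheet with the classes that actually appear in a page's HTML. This sketch is deliberately crude and regex-based: it will report classes that are only added by JavaScript or only used on other pages, and it can misread unusual selectors, so treat its output as a list of candidates to investigate. A tool such as the Coverage panel in Chrome DevTools gives a more reliable picture.

```python
import re
from html.parser import HTMLParser


class ClassCollector(HTMLParser):
    """Collect every class name used in an HTML document."""

    def __init__(self) -> None:
        super().__init__()
        self.classes: set[str] = set()

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name == "class" and value:
                self.classes.update(value.split())


def unused_class_selectors(css: str, html: str) -> set[str]:
    """Return class names that appear in CSS selectors but not in the HTML."""
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.S)  # drop comments
    css = re.sub(r"url\([^)]*\)", "", css)  # so "hero.png" isn't read as ".png"
    # The text immediately before each "{" is a selector or an @-rule prelude
    preludes = re.findall(r"([^{}]+)\{", css)
    selector_classes: set[str] = set()
    for prelude in preludes:
        selector_classes.update(re.findall(r"\.(-?[_a-zA-Z][\w-]*)", prelude))
    collector = ClassCollector()
    collector.feed(html)
    return selector_classes - collector.classes
```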

CSS can also be checked for validity, using the W3C CSS validator.

The tougher nut is JavaScript.

Like CSS, less is more. The perfect page would rely entirely on native browser functionality, and so would have no need for any JS, just HTML & CSS.

Why would this be the perfect page? Well, there are many good reasons, but let's start with three:

- JavaScript processing takes a lot more time and effort than processing HTML & CSS. That effort slows down the experience for your users, but it also slows it down for non-human users. For example, it has long been the case that even though Google may quickly index your webpage, it can take days to render it, and if your page is highly dependent on JS, that initial indexation will be incomplete. AI agents are also hit by the additional processing time and effort required.

- JavaScript introduces an additional point of failure into your website. Browsers are relatively tolerant of minor faults in HTML (as discussed earlier), but one badly written line of JS could break the whole page. How many times have you heard "try a different browser or device"? That is most likely a JS issue.

- JavaScript adds complexity to your website. It is a whole other language to maintain, creating highly complex and interdependent files. But more than this, too often it is used to solve problems that would be better solved other ways. Tricky to alter the page template in server-side code? Never mind, we'll just fix it using JavaScript! It has become both the pen and the correction fluid.
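One way to see your page roughly the way a non-rendering crawler or a simple agent might is to look at the HTML your server sends before any JavaScript runs. This sketch assumes you have already fetched that raw HTML (for example with `curl`) and have a hypothetical list of phrases your core journey depends on, such as the product name and the add-to-basket label; it reports which of those phrases are missing from the server-rendered text.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collect text from raw HTML, skipping script, style and template content."""

    SKIP = {"script", "style", "template"}

    def __init__(self) -> None:
        super().__init__()
        self.depth = 0  # how many skipped elements we are currently inside
        self.parts: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self.depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self.depth:
            self.depth -= 1

    def handle_data(self, data):
        if not self.depth:
            self.parts.append(data)


def missing_without_js(raw_html: str, must_contain: list[str]) -> list[str]:
    """Return the phrases that do not appear in the server-rendered text."""
    extractor = TextExtractor()
    extractor.feed(raw_html)
    # Normalise whitespace and case before searching
    text = " ".join(" ".join(extractor.parts).split()).lower()
    return [phrase for phrase in must_contain if phrase.lower() not in text]
```

If the button label only exists inside a script, as in `<div id="app"></div><script>...</script>`, it will be reported as missing: that part of the journey depends entirely on JavaScript.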

The fact is that, for core functionality on the vast majority of webpages, JavaScript should not be necessary. There are many obvious exceptions: Google Sheets wouldn't be much fun without JavaScript, for example. But the core journey on every ecommerce website should be usable without JavaScript. That's not to say you can't have any interactivity (where would we be without a mega-menu?); you might be amazed at what you can achieve with CSS.

But the unfortunate reality is that JavaScript has been applied by the bucket-load.

But I digress. What is our measure of quality for JavaScript? It boils down to two questions: is there as little of it as possible, and does it execute without error?
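The first of those questions can at least be tracked. This is a minimal sketch of a per-page script inventory: it lists external scripts and measures the size of inline JavaScript, ignoring data blocks such as JSON-LD structured data. It does not download the external files, so their sizes have to be measured separately, and it says nothing about runtime errors, for which you need the browser console or real-user error monitoring.

```python
from html.parser import HTMLParser

# type values that mean "this is executable JavaScript" (empty = default)
JS_TYPES = {"", "text/javascript", "application/javascript", "module"}


class ScriptInventory(HTMLParser):
    """List external scripts and measure inline script size in one page."""

    def __init__(self) -> None:
        super().__init__()
        self.external: list[str] = []
        self.inline_bytes = 0
        self._in_inline_script = False

    def handle_starttag(self, tag, attrs):
        if tag != "script":
            return
        attributes = dict(attrs)
        if (attributes.get("type") or "").lower() not in JS_TYPES:
            return  # e.g. JSON-LD structured data, not executable code
        if attributes.get("src"):
            self.external.append(attributes["src"])
        else:
            self._in_inline_script = True

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_inline_script = False

    def handle_data(self, data):
        if self._in_inline_script:
            self.inline_bytes += len(data.encode("utf-8"))


def script_inventory(html: str) -> tuple[list[str], int]:
    """Return (external script URLs, total bytes of inline JavaScript)."""
    inventory = ScriptInventory()
    inventory.feed(html)
    return inventory.external, inventory.inline_bytes
```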

So how did we get here?

I think there are three main reasons: one is history, one is bottom-up and the other is top-down.

History

Originally, the web was built around documents: think of early sites, and even the standards shaped by the World Wide Web Consortium. HTML was the core, with CSS for styling and minimal scripting. However, even back then quality wasn't amazing. Everything was being built in Dreamweaver with table-based layouts, and hacked about to try to create some sort of experience. Browsers were unstable and inconsistent.

Then companies like Google and Facebook started building highly interactive, app-like experiences in the browser. Gmail was a turning point. Suddenly, the browser wasn't just for reading—it was for running software. That required far more client-side logic, and JavaScript became the default tool.

There was a mindset change in those who built websites from "we need to add a bit of JavaScript to do this" to "this is so cool, we can do this in JavaScript, so let's!" I know, I have been there and done that.

Developers (and I would partly count myself among them) are like magpies: they love the shiny new thing. Developers with any experience love DRY (Don't Repeat Yourself), and look to abstract away anything that seems repetitive, creating systems that notionally solve some sort of scaling problem.

We moved away from writing HTML to writing web applications.

The Developer Experience

As the complexity of websites and web applications increased, so did the maintenance burden. Developers walked further down the path of abstraction and systemisation, and we saw the introduction of frameworks like React.

These frameworks changed how people think about building UIs. Instead of writing HTML as a primary artifact, developers started writing components that generate HTML.

The frameworks have prioritised the developer experience over the user experience. This is unacceptable.

If you are no longer responsible for creating a page of HTML, just a component on that page, you stop caring about the quality of the page. But a well-written document needs to be considered as a whole: users load an entire page, not just individual components.
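Some defects only exist at page level: two components that are each fine in isolation can both use the same `id`, or both render a `<main>` element. The validator will flag these too, but a small check like this minimal sketch can run against the assembled output in your build. The "one `<main>`" rule here is simplified; the HTML standard allows extra `<main>` elements if they are hidden, which this sketch does not account for.

```python
from collections import Counter
from html.parser import HTMLParser


class PageChecks(HTMLParser):
    """Collect page-level facts that individual components cannot see."""

    def __init__(self) -> None:
        super().__init__()
        self.ids: Counter = Counter()
        self.main_count = 0

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name == "id" and value:
                self.ids[value] += 1
        if tag == "main":
            self.main_count += 1


def page_problems(html: str) -> list[str]:
    """Report duplicate ids and an unexpected number of <main> elements."""
    checks = PageChecks()
    checks.feed(html)
    problems = [f'id "{i}" used {n} times' for i, n in checks.ids.items() if n > 1]
    if checks.main_count != 1:
        problems.append(f"expected one <main>, found {checks.main_count}")
    return problems
```

For example, a header search box and a footer search box built from the same component will both carry `id="search"` once the page is assembled.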

See no Evil, Hear no Evil.

All of the above being said, I ultimately think the real responsibility lies with those who own/manage the websites.

Quality was never asked for, never defined and therefore never delivered.

It is easy to say "that's not my job, I expect the developers to deliver good quality", but that is a poor excuse when the user experience is at stake. Mostly, all you needed to do was to specify that the website had to be accessible and then verify that it was. Accessibility would have gotten you 90% of the way there. But instead, you ignored it as a non-issue.

How do we fix this?

I think there are three gaps to fix: the education gap, the expectation gap and the specification gap.

The Education Gap

There are actually two education gaps.

The first is among those on the "business" side of the website. They need to better understand how websites work, for all users.

That is not to say they need to know how to write good HTML (although that would be nice), but they should at least know (and believe) that good markup matters. The best shortcut to this, I would say, is to try using the website with a screen reader: either the one built into your operating system, or NVDA, which you can download for free.

The second gap is within the developer community. They need to educate themselves on what good looks like. And that does not mean just ticking a compliance box. Running testing tools and passing is not good enough. You need to take a step back and understand the principles of good HTML, CSS & JS and ask whether the built solution is true to those principles.

Let me give you an example that should speak to both groups. In this rather lengthy blog post, I have broken the main subjects into their own `<section>` elements. Each section has an `aria-label` (basically a title for that section) so that screen reader users will hear an announcement for each section as they navigate through the page (NVDA users press "d" to move between landmarks). The document would be perfectly valid without these section elements and their labels, but with them the in-page navigation is much more usable and understandable. It is also semantically richer, which should be useful for non-human users. It's not compliance; it is understanding and applying the principles of information architecture and semantic markup.
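If you want to check that a page exposes the landmarks you intended, you can produce a rough approximation of the list a screen reader's landmark navigation steps through directly from the markup. This sketch simplifies the mapping rules: it ignores explicit `role` attributes and `aria-labelledby`, and it does not handle the case where `<header>` and `<footer>` stop being landmarks when nested inside other sectioning elements. Treat it as a quick sanity check and confirm with a real screen reader such as NVDA.

```python
from html.parser import HTMLParser

# Simplified element-to-landmark mapping (see caveats in the text above)
LANDMARKS = {
    "header": "banner",
    "nav": "navigation",
    "main": "main",
    "aside": "complementary",
    "footer": "contentinfo",
}


class LandmarkLister(HTMLParser):
    """Collect (role, label) pairs for landmark elements in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.found: list[tuple[str, str]] = []

    def handle_starttag(self, tag, attrs):
        label = dict(attrs).get("aria-label") or ""
        if tag in LANDMARKS:
            self.found.append((LANDMARKS[tag], label))
        elif tag == "section" and label:
            # A <section> only becomes a "region" landmark once it has a name
            self.found.append(("region", label))


def list_landmarks(html: str) -> list[tuple[str, str]]:
    lister = LandmarkLister()
    lister.feed(html)
    return lister.found
```

An unlabelled `<section>` does not appear in the output, which mirrors why the `aria-label` on each section matters for landmark navigation.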

The Expectation Gap

Once you are educated you are empowered: start to change your expectations on what should be delivered.

Developers: embrace HTML. You love detail and trust me, it is really satisfying to look at the webpage source in the browser and see a thing of beauty. You have a real opportunity to make life better for a whole range of users. The code that appears in the browser is what defines you to the outside world. Be the hero that Gotham needs.

Website owners: change your expectations about what good looks like. Do not be accepting of anything but good quality. As Ronald Reagan used to say: "trust, but verify".

If your website is delivered by an external partner and they cannot meet these quality standards, you should be looking for a new technology partner. Make quality of implementation part of the vetting process. Do they take accessibility seriously on their clients' websites? Do they on their own website?

The Specification Gap

Once you have changed your expectations, you need to codify that in a way that clearly communicates to those you work with that only good quality is acceptable. There is nothing magical about good quality HTML, CSS & JavaScript, sometimes all you need to do is ask for it.

If you are building/rebuilding your website then you have the best opportunity to hit your new quality standards. But you need to bake it into the specification process. If building it with compliant HTML is problematic/expensive, you need to ask why. This is surely an indicator that something is fundamentally wrong. Have you chosen the wrong technology or the wrong partner?

If you have an already established site, which has a myriad of issues and rebuilding is not in the plan, then you only have one option. Every project must move you further and further towards quality. You may have projects which are solely dedicated to resolving quality issues, however the majority will probably be business-as-usual or new features. It is vital that your new quality standards are a part of each and every one of these projects. It is a hard road to walk, but step-by-step you will improve.
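One way to make "every project must move you towards quality" enforceable is a ratchet in your build pipeline: record the current defect counts (validator errors, unused selectors and so on) as a baseline, fail any change that increases a count, and lock in any improvement as the new baseline. This is a minimal sketch that assumes you already produce those counts per check; the check names are hypothetical, and a check with no recorded baseline is treated as having a baseline of zero.

```python
def ratchet(
    baseline: dict[str, int], current: dict[str, int]
) -> tuple[bool, dict[str, int]]:
    """Fail if any defect count went up; otherwise lock in improvements."""
    regressions = {
        check: count
        for check, count in current.items()
        if count > baseline.get(check, 0)
    }
    if regressions:
        return False, baseline  # block the change and keep the old baseline
    # No count went up, so the current counts become the new baseline
    # (checks not measured this time keep their previous values)
    return True, {**baseline, **current}
```

For example, going from 40 validator errors to 35 passes and makes 35 the new ceiling, so the next project cannot quietly slip back to 40.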

Below is a simple framework of how I think websites should be constructed, at least conceptually. This should be one of the guiding principles of your quality framework.

Closing Thoughts

This isn't some sort of Pirsig-esque philosophical position. You have three big reasons for addressing this:

- The higher the underlying quality of your website, the more users (human or otherwise) can easily and effectively use it. That should be positive for your business metrics.

- Quality can be a differentiator. Most of your competitors' websites are of poor quality, so set yourself apart and above them.

- And perhaps most importantly to me: it is the right thing to do. Does it sit okay with you that a large number of people cannot use your website due to their sight issues? Your lack of action is discrimination, which nobody should be comfortable with.

The quality I'm asking for is not ground-breaking. Success often comes from doing the little things well. Businesses often under-appreciate the fact that users care more about a website that just works than about additional features. Well-executed simplicity is often the best strategy.